Don't give the intern root access (or a Mac Mini)

To understand the mess that happened this week in AI, you have to understand what OpenClaw is.

It started as the open-source answer to the proprietary "Computer Use" agents. The promise was intoxicating: a self-hosted, autonomous agent that could navigate the web, click buttons, and use a terminal just like a human. It was the dream of breaking free from the walled gardens of OpenAI or Anthropic, giving you a worker that lived on your infrastructure.

But as with every rapid technological adoption, the "cool factor" outpaced the "security factor."

This week has been a bloodbath. We are seeing hundreds of OpenClaw instances exposed openly on the internet. Credential dumps. API keys in plain text. This means third parties can do basically whatever they want with your private data.

The fundamental misunderstanding isn't about code; it's about architecture. You think you are the only input for your agent. You aren’t. An agent is an ingestion engine. Every email it reads, every webpage it visits - that is content written by someone else.

If a random person sends you a DM, that message is no longer just text. It is an input for a system that often has shell access to your machine. This is "Indirect Prompt Injection," effectively turning your inbox into a command line for strangers.

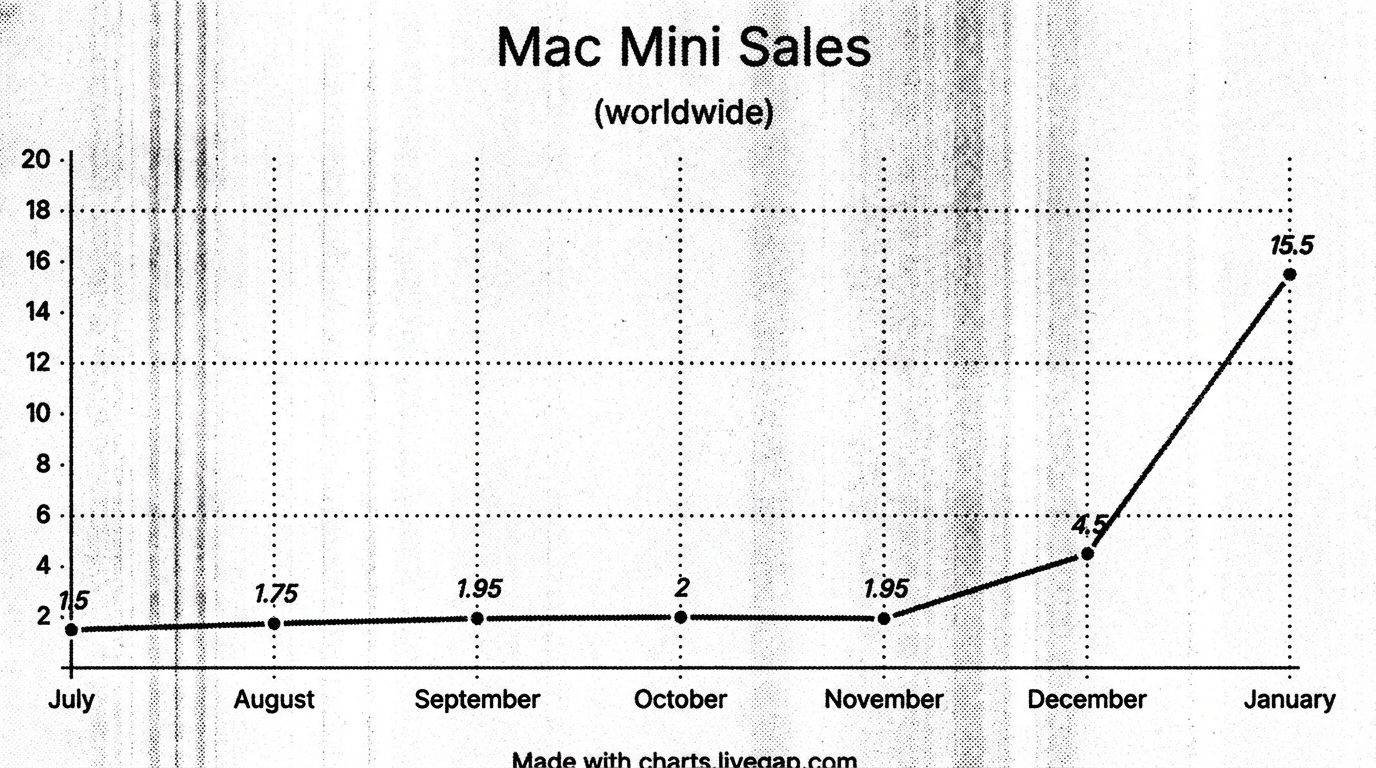

The Mac Mini Epidemic

There is a strange epidemic right now of people buying Mac Minis just to run a Python script.

You do not need an €800+ consumer machine sitting on your desk to make API calls. It’s a waste of silicon and electricity. An agent should be a headless background process, not a desktop pet.

I run my personal stack on a Raspberry Pi 5. It sits quietly, consumes as much power as a lightbulb, and does exactly what the Mac Mini does: it pushes JSON back and forth. If you don't want hardware, use a €5 VPS or the AWS free tier. But please, stop buying desktop computers for background workers.

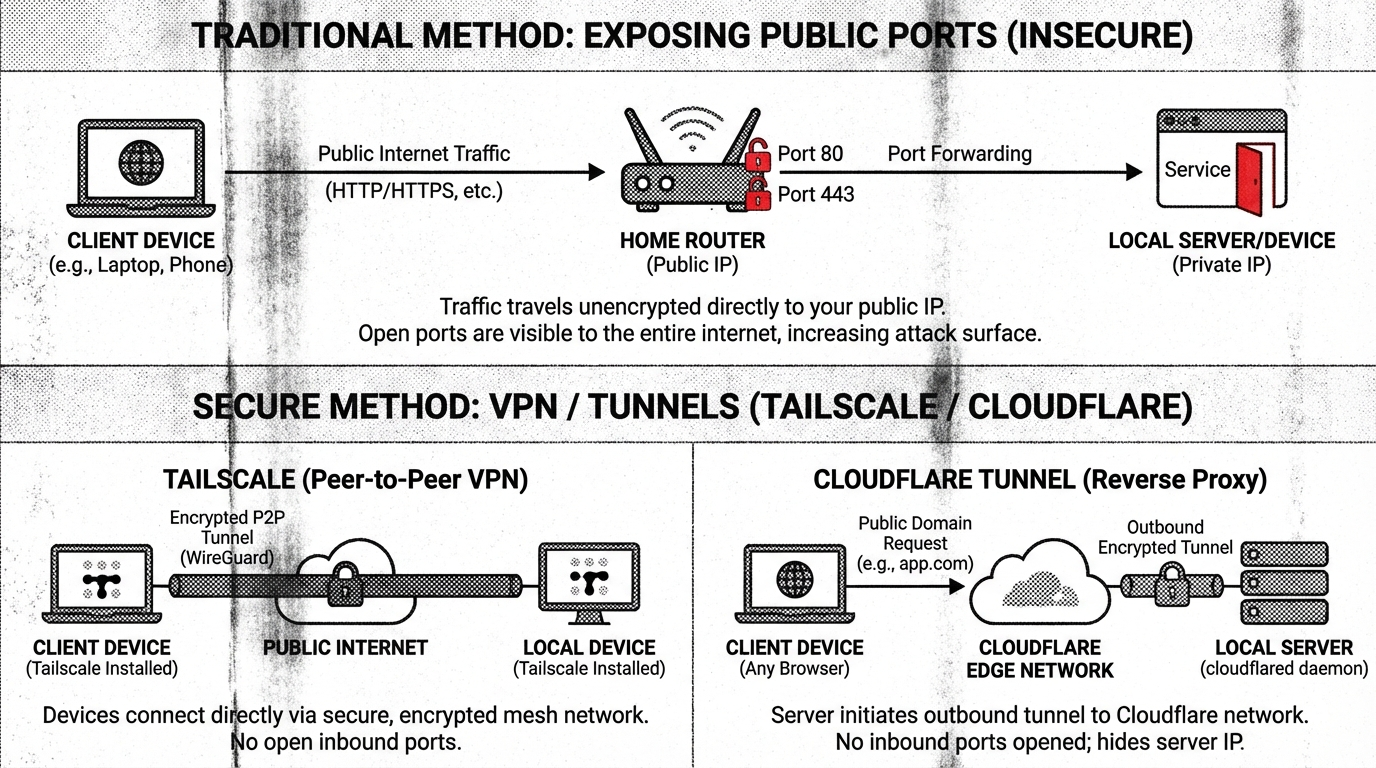

The Architecture: Identity and Isolation

If you are going to deploy this, treat the agent like an external intern. You wouldn't give a summer intern your unlocked laptop and your primary Google credentials. You shouldn't give them to your agent, either.

- Identity: The agent needs its own Google account, its own calendar, and its own set of permissions for each service it has access to. If it gets compromised, you burn the agent's identity, not yours.

- Segmentation: Do not run this on your bare metal OS. Use Docker.

- Network: Never expose ports to the public internet.

- Tailscale is the easiest way to access it securely.

- Cloudflare Tunnels are the professional choice if you need granular control without opening ports on your router.

Human in the Loop (HITL)

I build automated systems for companies for a living. The complexity isn't just in making the system work, it's in making it safe.

We often connect these agents to RAG (Retrieval-Augmented Generation) systems and massive knowledge bases. But no matter how good the context is, the output of an LLM is probabilistic, not deterministic.

That is why you need a Human in the Loop. You cannot blindly automate critical decisions based on a system that predicts the next token based on statistical likelihood.

There is a deeper issue here regarding the "Black Box" nature of AI and how it degrades our critical thinking skills. We trust the output because it looks confident, even when the logic path is hidden. That is a topic for another note.

For now, just lock the doors. Secure your network, segment permissions, and spend your money on the hardware wisely.

And if you are deploying this for your business, choose your AI consultant wisely. You are paying for the architecture, not the installation script.